The Importance of AI Red Teaming

The fast growth of generative AI systems makes it crucial to ensure their safety and security. AI red teaming helps evaluate these technologies by simulating real-world attacks. However, current methods struggle with effectiveness and implementation due to the complexity of modern AI systems.

Challenges in AI Security

Modern AI systems have many capabilities, which create numerous potential vulnerabilities. The use of advanced AI models with high privileges increases the risk of security breaches. Current security methods often miss important system-level vulnerabilities, focusing mainly on model-level risks.

Emerging Threats

AI systems using retrieval augmented generation (RAG) can be manipulated through hidden malicious instructions, leading to data theft. Although some defensive techniques exist, they do not fully eliminate risks due to the inherent limitations of language models.

Microsoft’s Comprehensive Framework

Researchers from Microsoft have developed a robust framework for AI red teaming based on their experience with over 100 generative AI products. This framework introduces a structured approach to identifying and evaluating security risks in AI systems.

Key Features of the Framework

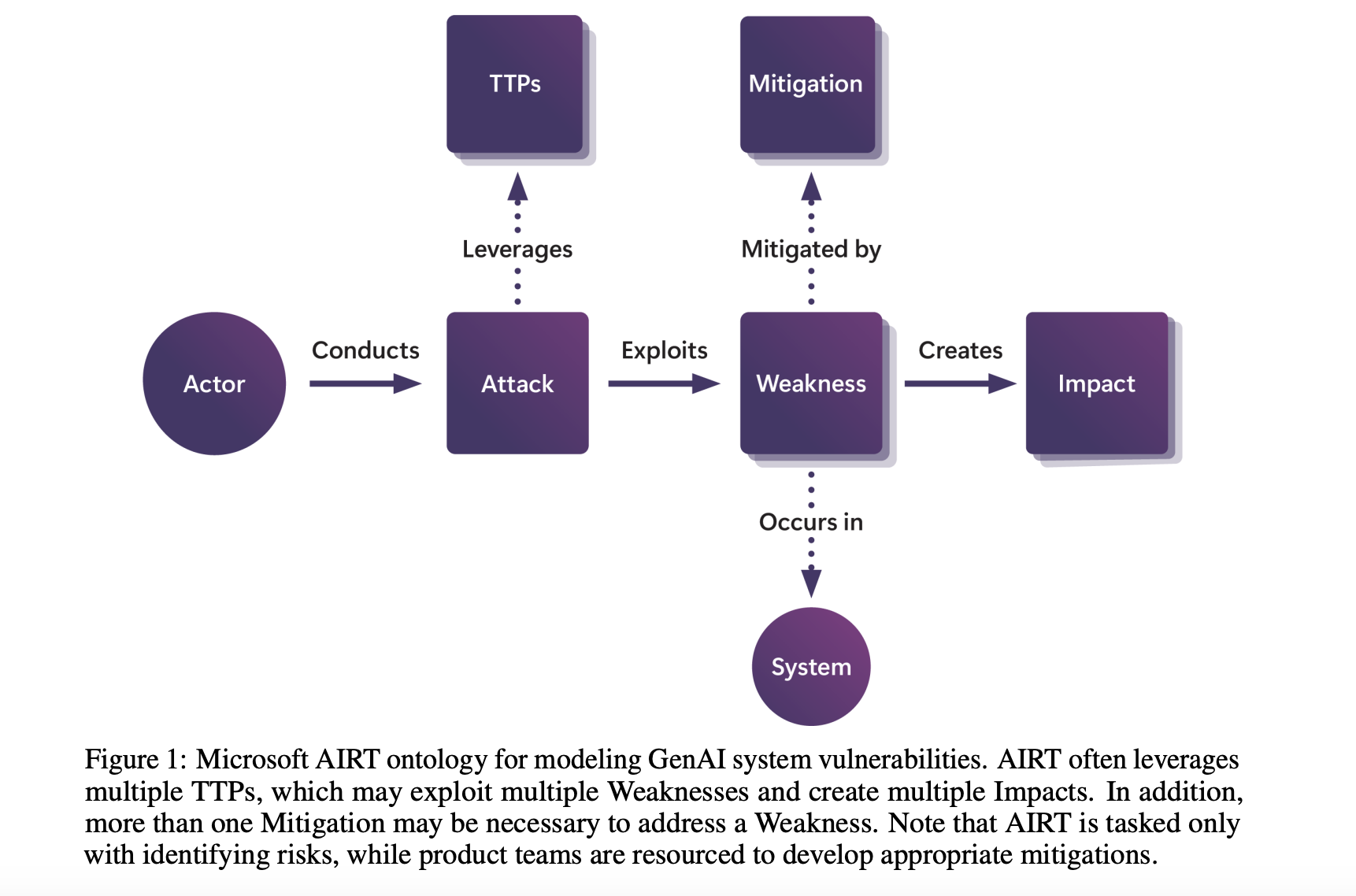

- **Structured Threat Model Ontology**: Systematically identifies traditional and emerging security threats.

- **Eight Key Lessons**: Offers insights from real-world operations to enhance security testing.

- **Dual-Focus Approach**: Targets both standalone AI models and integrated systems.

Operational Architecture

The framework distinguishes between cloud-hosted models and complex systems that use these models in applications. It addresses traditional security concerns like data theft while also tackling AI-specific vulnerabilities.

Effectiveness of the Framework

Microsoft’s framework has proven effective through analysis of attack methods. Findings show that simpler attack techniques can be just as effective as complex ones, emphasizing the need for a holistic security approach that considers all vulnerabilities.

Conclusion

Microsoft’s framework for AI red teaming offers valuable insights for organizations looking to improve their AI security. By combining structured threat modeling with practical lessons, it provides a strong foundation for developing effective risk assessment protocols.

Next Steps

Explore the paper for more details. For companies looking to leverage AI, consider the following:

- **Identify Automation Opportunities**: Find key areas for AI implementation.

- **Define KPIs**: Measure the impact of AI on business outcomes.

- **Select an AI Solution**: Choose tools that fit your needs.

- **Implement Gradually**: Start small, gather data, and expand wisely.

For AI KPI management advice, contact us at hello@itinai.com. Stay updated on leveraging AI through our Telegram channel t.me/itinainews or Twitter @itinaicom.

Discover how AI can transform your sales processes and customer engagement at itinai.com.