Understanding Retrieval-Augmented Generation (RAG) Systems

Retrieval-augmented generation (RAG) systems combine information retrieval with text generation to answer complex questions. Because their answers draw on retrieved documents, they can provide more context and insight than models that rely on generation alone. RAG systems are particularly valuable in fields like legal research and academic analysis, where a broad knowledge base is essential.

Benefits of RAG Systems

- Enhanced Context: RAG models combine retrieved, query-specific information into comprehensive answers.

- Diverse Perspectives: They can surface multiple viewpoints, which is essential for in-depth understanding.

Evaluating RAG System Performance

Assessing RAG systems is challenging because they must answer complex, multi-layered questions. Traditional evaluation methods often overlook the depth these questions require: current tools focus on surface-level metrics and fail to capture how complete a response is.

Common Shortcomings

- Limited Coverage: Many RAG systems only partially address user needs.

- Inadequate Detail: Responses often lack essential background and follow-up information.

New Evaluation Framework from Researchers

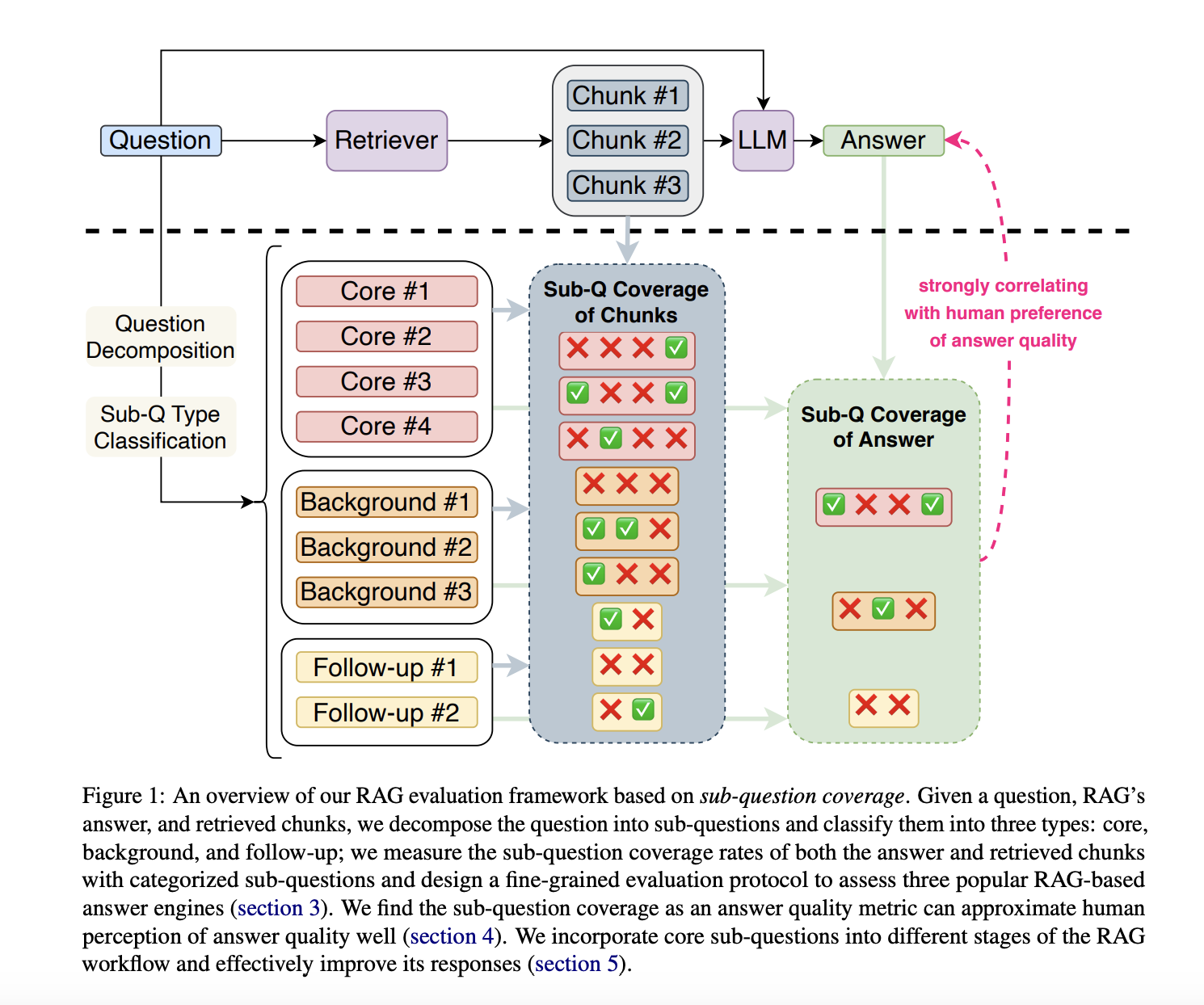

Researchers from Georgia Institute of Technology and Salesforce AI Research have developed a new evaluation method focusing on “sub-question coverage.” This framework breaks down complex questions into core, background, and follow-up sub-questions, allowing for a more detailed assessment of response quality.

Two-Step Evaluation Method

- Decomposition: Break down each complex question into sub-questions and label each one as core, background, or follow-up.

- Testing: Assess how well RAG systems retrieve content for each sub-question type.
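
The two steps above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the researchers' implementation: the decomposition is assumed to be done already (by hand or by a language model), and the names `SubQuestion`, `keyword_judge`, and `coverage_by_type` are hypothetical. In particular, `keyword_judge` is a toy stand-in for whatever judgment a real evaluation would use to decide whether a sub-question is covered.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple


@dataclass(frozen=True)
class SubQuestion:
    """One decomposed sub-question (hypothetical structure for illustration)."""
    text: str
    kind: str                  # "core", "background", or "follow-up"
    keywords: Tuple[str, ...]  # assumption: terms the toy judge looks for


def keyword_judge(sub_q: SubQuestion, answer: str) -> bool:
    """Toy judge: a sub-question counts as covered if every keyword appears."""
    lowered = answer.lower()
    return all(keyword.lower() in lowered for keyword in sub_q.keywords)


def coverage_by_type(
    sub_questions: List[SubQuestion],
    answer: str,
    judge: Callable[[SubQuestion, str], bool] = keyword_judge,
) -> Dict[str, float]:
    """Return the fraction of covered sub-questions for each sub-question type."""
    totals: Dict[str, int] = defaultdict(int)
    covered: Dict[str, int] = defaultdict(int)
    for sub_q in sub_questions:
        totals[sub_q.kind] += 1
        if judge(sub_q, answer):
            covered[sub_q.kind] += 1
    return {kind: covered[kind] / totals[kind] for kind in totals}


# Step 1 (decomposition), written out by hand for a made-up question.
sub_questions = [
    SubQuestion("What is RAG?", "core", ("retrieval", "generation")),
    SubQuestion("How is RAG evaluated?", "core", ("sub-question coverage",)),
    SubQuestion("Why do plain language models fall short?", "background", ("hallucinat",)),
    SubQuestion("Which tools support RAG?", "follow-up", ("framework",)),
]

# Step 2 (testing): score one made-up answer against the sub-questions.
answer = "RAG pairs retrieval with generation so answers draw on retrieved documents."
print(coverage_by_type(sub_questions, answer))
# {'core': 0.5, 'background': 0.0, 'follow-up': 0.0}
```

Passing the judge in as a parameter keeps the bookkeeping separate from the harder question of what counts as "covered", so the keyword check can be swapped for a stronger judge without changing the coverage calculation.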

Key Findings from the Evaluation

The study revealed significant trends in RAG systems’ performance, highlighting both strengths and weaknesses:

- Core Sub-question Coverage: RAG systems missed about 50% of core sub-questions.

- System Accuracy: Perplexity AI scored highest with 71% accuracy in connecting content to responses.

- Background Information Gap: Coverage of background sub-questions was low, between 14% and 20%.

- Performance Rankings: Perplexity AI ranked highest overall, with Bing Chat excelling in structuring responses.

- Improvement Potential: All systems showed room for growth in core sub-question retrieval.

Conclusion and Future Steps

This research proposes a finer-grained way to evaluate RAG systems, centered on sub-question coverage. By measuring coverage separately for each sub-question type, the study identifies where current systems fall short and points to ways of improving response quality. The findings suggest practical improvements that can make RAG systems more effective for complex tasks.
